In today’s data-driven world, efficient data analysis is central to gaining insights and making informed decisions. Data scraping supports that analysis by extracting relevant information from many sources, so less time goes into collecting data and more into studying it. This article covers the benefits of data scraping, the key factors for doing it well, professional services, best practices, ethical considerations, challenges, and future trends.

Understanding Data Scraping

What is data scraping?

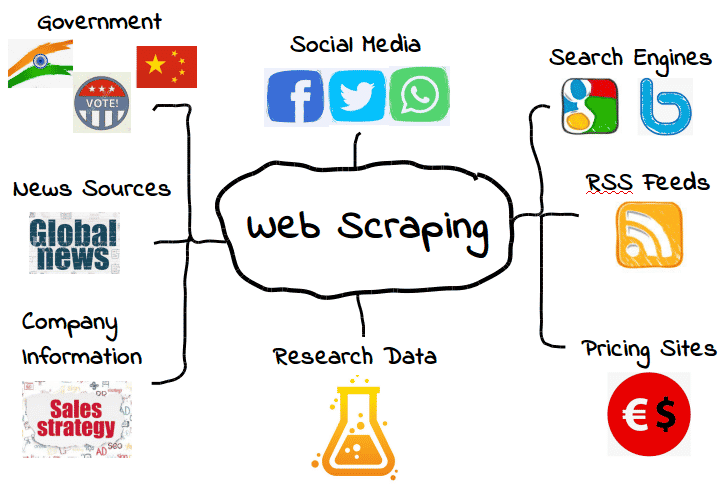

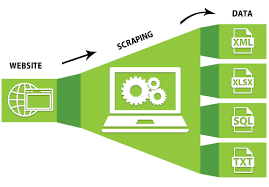

Data scraping, often called web scraping when the source is a website, is the process of automatically extracting information from websites and transforming it into a structured format, such as rows in a table, that can be used for analysis. Specialized software tools fetch pages, locate the relevant elements, and collect them systematically, so datasets can be built from many web pages efficiently.
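As a minimal sketch of that "page in, structured rows out" idea, the example below parses a small HTML fragment with Python's standard `html.parser` module. The markup, the `product`, `name`, and `price` class names, and the `ProductParser` and `scrape_products` helpers are all hypothetical; real pages need their own selectors.

```python
from html.parser import HTMLParser

# Hypothetical page fragment; real pages use different tags and classes.
SAMPLE_HTML = """
<ul>
  <li class="product"><span class="name">Widget</span><span class="price">$9.99</span></li>
  <li class="product"><span class="name">Gadget</span><span class="price">$24.50</span></li>
</ul>
"""


class ProductParser(HTMLParser):
    """Collects one dict per <li class="product"> element."""

    def __init__(self):
        super().__init__()
        self.records = []   # finished rows
        self._field = None  # field currently being read, if any

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class")
        if tag == "li" and css_class == "product":
            self.records.append({})  # start a new row
        elif tag == "span" and css_class in ("name", "price"):
            self._field = css_class

    def handle_data(self, data):
        # Only keep text that sits inside a recognized field.
        if self._field and self.records:
            self.records[-1][self._field] = data.strip()

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None


def scrape_products(html):
    parser = ProductParser()
    parser.feed(html)
    return parser.records


records = scrape_products(SAMPLE_HTML)
print(records)
```

The result is a list of dictionaries, one per product, which can be written to a CSV file or a database without further parsing.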

Benefits of utilizing data scraping for data analysis

- Comprehensive Information: Data scraping facilitates the retrieval of large volumes of data across multiple websites, providing a comprehensive range of information for analysis purposes.

- Time Efficiency: Automated scraping speeds up data gathering and saves the time that manual collection would otherwise take.

- Accuracy: Automating the process reduces the human errors that manual data collection is prone to.

- Scalability: Data scraping allows for scalability in collecting and analyzing vast amounts of data, providing opportunities for deeper insights and better decision-making.

- Competitive Advantage: By harnessing the power of data scraping, businesses gain valuable insights that offer a competitive edge in the market.

Key Factors for Effective Data Scraping

Reliable sources for data scraping

When conducting data scraping, it is essential to identify and select reliable sources that provide accurate and relevant data. Trusted websites and authoritative sources enhance the reliability of the obtained data, ultimately ensuring the quality of the analysis.

Ethical considerations when scraping data

While data scraping can be a valuable tool, it is crucial to navigate the ethical implications surrounding this practice. Respecting privacy laws, terms of service, and copyrights of websites is vital to maintain ethical boundaries during data scraping. Staying within legal norms and regulations helps establish a trustworthy and responsible approach to data scraping.

Data quality assurance during the scraping process

To maintain data integrity and reliability, implementing data quality assurance measures is essential during the scraping process. This includes verifying the accuracy, completeness, and consistency of scraped data, ensuring any errors or discrepancies are promptly addressed.
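One way to picture these checks is a small validation pass over the scraped rows. The sketch below is illustrative only: the `name` and `price` fields and the `check_quality` helper are assumptions, and real projects will have their own rules for what counts as accurate, complete, and consistent.

```python
def check_quality(records, required=("name", "price")):
    """Return (row_index, problem) tuples; an empty list means no issues were found."""
    problems = []
    seen = set()
    for i, row in enumerate(records):
        # Completeness: every required field is present and non-empty.
        for field in required:
            value = row.get(field)
            if value is None or not str(value).strip():
                problems.append((i, f"missing {field}"))

        # Accuracy: a price, when present, must parse as a non-negative number.
        price = row.get("price")
        if price not in (None, ""):
            try:
                if float(price) < 0:
                    problems.append((i, "negative price"))
            except (TypeError, ValueError):
                problems.append((i, "price is not numeric"))

        # Consistency: the same name should not appear twice.
        key = str(row.get("name") or "").strip().lower()
        if key:
            if key in seen:
                problems.append((i, "duplicate name"))
            seen.add(key)
    return problems


rows = [
    {"name": "Widget", "price": "9.99"},
    {"name": "", "price": "4.00"},
    {"name": "widget", "price": "abc"},
]
issues = check_quality(rows)
for index, problem in issues:
    print(f"row {index}: {problem}")
```

Running a pass like this right after each scrape makes it easier to catch a changed page layout before bad rows reach the analysis.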

Techniques to handle complex data scraping scenarios

In some instances, data scraping may pose challenges in complex scenarios. Techniques such as handling AJAX-loaded content, overcoming IP blocking, and solving CAPTCHA challenges are crucial to effectively navigate such roadblocks. Adopting advanced scraping techniques and utilizing APIs and automation can streamline the process and improve efficiency.

Exploring Professional Data Scraping Services

Characteristics and advantages of professional data scraping services

Professional data scraping services offer several advantages over manual scraping. They bring advanced tooling, expertise, and experience to data extraction, and they follow a systematic approach to gathering data, which helps deliver accurate and relevant datasets for analysis. Their expertise also helps in handling complex scraping scenarios.

Popular data scraping service providers in the market

Several data scraping service providers offer their expertise to help businesses analyze data efficiently. Reputable providers have a proven track record of successful data scraping projects and offer customized solutions tailored to specific business needs.

Choosing the Right Data Scraping Service

Assessing your data scraping needs

Before choosing a data scraping service provider, it is crucial to assess your specific data scraping needs. Determining the quantity and variety of data required, as well as the complexity of scraping scenarios, will assist in finding a service provider that aligns with your requirements.

Evaluating service providers based on expertise and experience

Expertise and experience are vital factors in selecting the right data scraping service provider. Evaluating their track record, client testimonials, and expertise in handling various scraping challenges will give insights into their capabilities. Experienced service providers are more likely to deliver efficient and accurate results.

Considering pricing models and reliability of the service

Pricing models play a significant role in determining the feasibility of hiring a data scraping service provider. It is essential to evaluate whether the pricing aligns with your budget and consider any additional costs for customization or maintenance. Additionally, assessing the reliability of the service through reviews or referrals will help in making an informed decision.

Best Practices for Data Scraping

Identifying target websites for scraping

To optimize data scraping efficiency, it is crucial to strategically identify target websites that possess the desired data. Focusing on trusted and relevant websites increases the accuracy and reliability of the scraped data, ensuring high-quality analysis.

Optimizing scraping efficiency through automation and APIs

Automation and Application Programming Interfaces (APIs) can significantly enhance data scraping efficiency. Where a website offers an API, it usually returns structured data directly, which is faster and more stable than parsing HTML. Automated tools then handle the repetitive requests, reducing manual effort and increasing productivity.
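As a rough illustration of automated extraction through an API, the sketch below walks a paginated JSON endpoint until no further page is reported. The response shape (an `items` list plus a `next_page` value) and the `fetch_page` callable are assumptions made for this example; a real API documents its own pagination scheme, and `fetch_page` would wrap an actual HTTP request.

```python
import json


def fetch_all(fetch_page, max_pages=100):
    """Collect items from a paginated JSON API.

    fetch_page(page_number) must return the response body as a JSON string.
    max_pages is a safety cap so a misbehaving API cannot loop forever.
    """
    items = []
    for page in range(1, max_pages + 1):
        payload = json.loads(fetch_page(page))
        items.extend(payload["items"])
        if not payload.get("next_page"):
            break  # the API reports no further pages
    return items


# Stand-in for a real HTTP call, so the example runs without network access.
FAKE_PAGES = {
    1: {"items": ["a", "b"], "next_page": 2},
    2: {"items": ["c"], "next_page": None},
}


def fake_fetch(page):
    return json.dumps(FAKE_PAGES[page])


all_items = fetch_all(fake_fetch)
print(all_items)
```

Passing the fetch function in as a parameter keeps the pagination logic testable and lets the same loop work with whichever HTTP client a project already uses.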

Ensuring legality and adherence to terms of service

Complying with the law and with each website's terms of service is paramount when conducting data scraping. Review the terms of service of target websites, and either seek permission or abide by their guidelines. Respecting website owners’ rights and acquiring necessary permissions fosters trust and helps avoid legal repercussions.
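Terms of service have to be read by a person, but many sites also publish a machine-readable `robots.txt` file that states which paths automated clients may visit. The sketch below checks URLs against such a file using Python's standard `urllib.robotparser`. The file contents, the `example-bot` user agent, and the URLs are hypothetical, and passing this check is not a substitute for reviewing the terms of service.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real crawler would download it from the site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""


def build_rules(robots_text):
    """Parse robots.txt text into a rule set that can be queried per URL."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return parser


rules = build_rules(ROBOTS_TXT)

allowed = rules.can_fetch("example-bot", "https://example.com/products")
blocked = rules.can_fetch("example-bot", "https://example.com/private/report")
delay = rules.crawl_delay("example-bot")  # seconds between requests, if declared

print(allowed, blocked, delay)
```

A scraper can call `can_fetch` before every request and skip any URL the site has asked automated clients to avoid.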

Handling Scraped Data

Cleaning and preprocessing scraped data

Scraped data often requires cleaning and preprocessing to ensure its usability in analysis. Removing duplicate entries, correcting formatting inconsistencies, and standardizing data are crucial steps in preparing scraped data for meaningful analysis.
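The three steps named above can be sketched in a few lines. This is a minimal example under stated assumptions: rows have `name` and `price` fields, prices use a dot as the decimal separator and commas as thousands separators, and two rows with the same name (ignoring case) are duplicates. The `clean_records` helper is hypothetical.

```python
import re


def clean_records(records):
    """Normalize whitespace, convert prices to floats, and drop duplicate names."""
    cleaned = []
    seen = set()
    for row in records:
        # Correct formatting: collapse runs of whitespace and trim the ends.
        name = " ".join(str(row.get("name", "")).split())

        # Standardize: keep only digits and the decimal point, then convert.
        digits = re.sub(r"[^\d.]", "", str(row.get("price", "")))
        try:
            price = float(digits)
        except ValueError:
            continue  # skip rows whose price cannot be parsed

        if not name:
            continue

        # Remove duplicates: the first occurrence of each name wins.
        key = name.lower()
        if key in seen:
            continue
        seen.add(key)
        cleaned.append({"name": name, "price": price})
    return cleaned


raw = [
    {"name": "  Widget ", "price": "$1,299.00"},
    {"name": "widget", "price": "$1,299.00"},
    {"name": "Gadget\n", "price": "24.5 USD"},
    {"name": "Broken", "price": "n/a"},
]
clean = clean_records(raw)
print(clean)
```

Rows that cannot be repaired are dropped silently here; in practice it is often worth logging them so that systematic parsing problems become visible.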

Organizing and storing scraped data effectively

Organizing and storing scraped data in a structured manner is essential for easy retrieval and analysis. Employing data management techniques and utilizing database systems or cloud storage services can enhance accessibility and facilitate efficient analysis.
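For a small project, a database system can be as simple as SQLite, which ships with Python. The sketch below stores cleaned rows in a table keyed by product name, so scraping the same product again updates the existing row instead of adding a duplicate. The `products` table, its columns, and the `store_records` helper are assumptions for illustration; an in-memory database is used so the example leaves no file behind.

```python
import sqlite3


def store_records(conn, records):
    """Insert rows into the products table, replacing rows with the same name."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS products ("
        "name TEXT PRIMARY KEY, price REAL, scraped_at TEXT)"
    )
    conn.executemany(
        "INSERT OR REPLACE INTO products (name, price, scraped_at) "
        "VALUES (:name, :price, :scraped_at)",
        records,
    )
    conn.commit()


conn = sqlite3.connect(":memory:")  # use a file path to persist between runs

# First scrape.
store_records(conn, [
    {"name": "Widget", "price": 9.99, "scraped_at": "2024-01-01"},
    {"name": "Gadget", "price": 24.5, "scraped_at": "2024-01-01"},
])
# A later scrape finds a new price for Widget.
store_records(conn, [
    {"name": "Widget", "price": 8.99, "scraped_at": "2024-01-02"},
])

stored = conn.execute("SELECT name, price FROM products ORDER BY name").fetchall()
print(stored)
```

If price history matters for the analysis, the table could instead use `(name, scraped_at)` as its key so that every scrape is kept.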

Unleashing the Power of Scraped Data in Analysis

Integrating scraped data with other datasets

To gain comprehensive insights, integrating scraped data with existing datasets is crucial. This integration allows for a holistic view, revealing correlations, trends, and patterns that may not be apparent within individual datasets alone. Merging scraped data with internal databases or other external sources enriches the analysis.
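A merge of this kind usually comes down to joining two datasets on a shared key. The sketch below attaches scraped competitor prices to internal product rows. The `sku` key, the field names, and the `merge_by_sku` helper are hypothetical; the hard part in practice is often finding a key that both datasets actually share.

```python
def merge_by_sku(internal, scraped):
    """Left-join scraped rows onto internal rows using 'sku' as the key."""
    competitor = {row["sku"]: row for row in scraped}
    merged = []
    for row in internal:
        match = competitor.get(row["sku"])
        merged.append({
            **row,
            # None marks products for which nothing was scraped.
            "competitor_price": match["price"] if match else None,
        })
    return merged


internal_rows = [
    {"sku": "W-1", "our_price": 10.0},
    {"sku": "G-2", "our_price": 25.0},
]
scraped_rows = [
    {"sku": "W-1", "price": 9.5},
]

combined = merge_by_sku(internal_rows, scraped_rows)
for row in combined:
    print(row)
```

Keeping unmatched internal rows, rather than dropping them, makes gaps in the scraped coverage visible in the analysis.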

Leveraging visualization tools for comprehensive data analysis

Visualizing scraped data through charts, graphs, and interactive tools enables a better understanding of the patterns, relationships, and trends within the data. Visualization aids in presenting complex information in a clear and concise manner, enhancing the effectiveness of data analysis.

Applying machine learning techniques on scraped data

Applying machine learning algorithms to scraped data opens avenues for predictive analytics and advanced analysis. By training models on scraped data, patterns and insights can be extracted, allowing for proactive decision-making based on predicted trends.
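As a deliberately simple stand-in for a predictive model, the sketch below fits a straight line to a series of scraped prices with ordinary least squares and extrapolates one step ahead. The price series and the `fit_trend` and `forecast` helpers are made up for illustration, and a linear trend is only one of many possible models; real projects would typically use a dedicated machine learning library and validate the model on held-out data.

```python
def fit_trend(ys):
    """Least-squares line through (0, ys[0]), (1, ys[1]), ...; returns (slope, intercept)."""
    n = len(ys)
    if n < 2:
        raise ValueError("need at least two observations")
    mean_x = (n - 1) / 2
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def forecast(ys, steps_ahead=1):
    """Extrapolate the fitted line steps_ahead observations past the last one."""
    slope, intercept = fit_trend(ys)
    return intercept + slope * (len(ys) - 1 + steps_ahead)


# Hypothetical daily prices collected by a scraper.
prices = [10.0, 11.0, 12.0, 13.0]
slope, intercept = fit_trend(prices)
next_price = forecast(prices)
print(slope, intercept, next_price)
```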

Potential Challenges and Solutions

Overcoming IP blocking and CAPTCHA challenges

Some websites enforce IP blocking or present CAPTCHA challenges to prevent data scraping. To overcome these obstacles, utilizing proxy servers to change IP addresses or employing CAPTCHA-solving mechanisms can ensure continuous and uninterrupted data scraping.
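A complementary measure, not named in the paragraph above, is to avoid triggering blocks in the first place by slowing down when a server signals overload. The sketch below retries a request with exponentially growing pauses when it receives HTTP status 429 (Too Many Requests) or 503 (Service Unavailable). The `fetch` callable, which is assumed to return a `(status, body)` pair, and the `fetch_with_backoff` helper are hypothetical.

```python
import time


def fetch_with_backoff(fetch, url, max_attempts=4, base_delay=1.0, sleep=time.sleep):
    """Call fetch(url) and retry on 429/503, doubling the pause after each failure."""
    status, body = None, None
    for attempt in range(max_attempts):
        status, body = fetch(url)
        if status not in (429, 503):
            return status, body
        if attempt < max_attempts - 1:
            sleep(base_delay * 2 ** attempt)  # 1s, 2s, 4s, ...
    return status, body  # still failing after the last attempt


# Stand-in server: rejects the first two requests, then succeeds.
responses = iter([(429, ""), (503, ""), (200, "<html>ok</html>")])


def fake_fetch(url):
    return next(responses)


pauses = []  # record the pauses instead of actually sleeping
result = fetch_with_backoff(fake_fetch, "https://example.com/page", sleep=pauses.append)
print(result, pauses)
```

Injecting the `sleep` function keeps the example fast to run; in production the default `time.sleep` would apply the real delays.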

Dealing with dynamic websites and AJAX-loaded content

Dynamic websites and content loaded through Asynchronous JavaScript and XML (AJAX) present challenges in data scraping. Implementing techniques such as headless browsers, JavaScript manipulation, or utilizing APIs specifically designed to handle dynamic content can enable effective scraping in such scenarios.
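Headless browsers need third-party tooling, so the sketch here shows a lighter technique that sometimes works on dynamic sites: many pages ship their initial data as JSON inside a `<script>` tag, and the scraper can read that JSON directly instead of rendering the page. The page markup, the `application/json` script tag, and the `EmbeddedJSONParser` class are assumptions for this example, and the code expects at most one such tag per page.

```python
import json
from html.parser import HTMLParser

# Hypothetical page whose visible content is rendered by JavaScript.
PAGE = """
<html><body>
<div id="app"></div>
<script id="initial-state" type="application/json">
{"products": [{"name": "Widget", "price": 9.99}]}
</script>
</body></html>
"""


class EmbeddedJSONParser(HTMLParser):
    """Collects the text of a <script type="application/json"> element."""

    def __init__(self):
        super().__init__()
        self._inside = False
        self._chunks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/json":
            self._inside = True

    def handle_data(self, data):
        if self._inside:
            self._chunks.append(data)  # script text may arrive in pieces

    def handle_endtag(self, tag):
        if tag == "script":
            self._inside = False

    def result(self):
        """Return the decoded JSON, or None if no matching tag was found."""
        return json.loads("".join(self._chunks)) if self._chunks else None


parser = EmbeddedJSONParser()
parser.feed(PAGE)
state = parser.result()
print(state)
```

When a page has no embedded data and no usable API, a headless browser that executes the page's JavaScript remains the fallback.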

Ethical Considerations in Data Scraping

Respecting privacy and copyright issues

Respecting privacy laws and copyright regulations is vital when scraping data. It is essential to ensure that the scraped data does not include personally identifiable information without appropriate consent. Additionally, adhering to copyright laws and permissions when using scraped content prevents legal complications.
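One practical safeguard is to strip obvious personal identifiers from scraped text before it is stored. The sketch below redacts email addresses and phone-like numbers with regular expressions. The patterns and the `redact_pii` helper are deliberately crude assumptions: they will miss some identifiers and may flag other long digit sequences, so they complement a proper privacy review rather than replace it.

```python
import re

# Simplified patterns; real-world identifiers are more varied than this.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_pii(text):
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL_RE.sub("[email]", text)
    return PHONE_RE.sub("[phone]", text)


sample = "Contact jane.doe@example.com or call +1 (555) 010-9999 for details."
redacted = redact_pii(sample)
print(redacted)
```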

Adhering to legal norms and regulations

Data scraping should always abide by legal norms and regulations. Familiarizing oneself with national and international laws governing data collection, privacy, and intellectual property rights is essential to maintain ethical standards. Compliance with legal requirements ensures ethical data scraping practices.

Success Stories: Real-world Applications of Scraped Data Analysis

Companies across various industries have used data scraping to good effect. In e-commerce, for example, data scraping makes it possible to track competitor prices and inventory, which supports dynamic pricing strategies. Media companies scrape social media platforms to run sentiment analysis, enabling them to tailor content to public opinion.

Future Trends in Data Scraping and Analysis

As technology progresses, data scraping and analysis techniques will continue to advance. One expected direction is predictive analytics built on scraped data: by combining scraped datasets with machine learning algorithms, businesses can build models that forecast trends and support proactive, data-backed decisions.

Summary

Data scraping serves as a critical tool in maximizing data analysis efficiency. By extracting relevant information from various sources, data scraping ensures comprehensive datasets for analysis, saving time and improving accuracy. Ethical considerations, professional services, and best practices further enhance the effectiveness of data scraping. With the right approach and adherence to legal and ethical boundaries, businesses can unlock the power of scraped data to gain valuable insights and achieve success in today’s data-driven world.

FAQs

What legal aspects should I consider before scraping data?

Before scraping data, it is essential to understand and adhere to national and international laws governing data collection, privacy, and intellectual property rights. Compliance with legal requirements ensures ethical and responsible scraping practices.

Can I scrape data from any website without permission?

Scraping data from websites without permission may violate legal and ethical boundaries. It is crucial to review the target website’s terms of service and acquire necessary permissions to ensure ethical scraping practices.

How can I ensure the quality of the scraped data?

To ensure the quality of the scraped data, implementing data quality assurance measures is crucial. This includes verifying the accuracy, completeness, and consistency of the data and promptly addressing any errors or discrepancies.

Are there any specific programming languages/tools for data scraping?

Several programming languages offer libraries and frameworks designed for data scraping, for example Beautiful Soup and Scrapy in Python and rvest in R. Numerous standalone data scraping tools are also available that streamline the process.

Is data scraping only applicable to the tech industry?

Data scraping is applicable to various industries beyond the tech sector. From e-commerce to media and finance, businesses across domains utilize data scraping to gain valuable insights and make informed decisions.

Can I use data scraping for competitor analysis?

Absolutely! Data scraping is widely employed for competitor analysis. By monitoring competitor prices, product information, and customer reviews, businesses can gain a competitive edge by devising effective strategies.

What precautions can I take to avoid being blocked while scraping data?

To reduce the chance of being blocked while scraping data, consider routing requests through proxy servers and rotating across multiple IP addresses. Headless browsers and CAPTCHA-solving mechanisms can also help with pages that challenge automated clients.

How can I differentiate between reputable and unreliable data scraping service providers?

To differentiate between reputable and unreliable data scraping service providers, consider evaluating their track record, client testimonials, and expertise in handling various scraping challenges. Seeking referrals and checking reviews can provide valuable insights into their reliability and credibility.